You may have heard of Google Now, where you give a voice command and Android fetches the result for you. It recognizes your voice and either converts it into text or takes the appropriate action. Have you ever wondered how this is done? If your answer is the voice recognition API, then you are absolutely right. While playing with the Android voice recognition APIs recently, I found some interesting things, and the APIs turn out to be really easy to use in an application. Below is a small tutorial on the voice/speech recognition API. The final application will look similar to the one shown below. The application may not work on the Android Emulator because the emulator doesn't support voice recognition, but it should work on a phone.

Project Information: Meta-data about the project.

Platform Version : Android API Level 15.

IDE : Eclipse Helios Service Release 2

Emulator : Android 4.1(API 16)

Prerequisite: Preliminary knowledge of Android application framework, and Intent.

Voice recognition is available through RecognizerIntent. Create an Intent with the action RecognizerIntent.ACTION_RECOGNIZE_SPEECH, pass the extra parameters, and start the activity for a result. This launches the recognizer prompt, customized by your extra parameters. Internally, voice recognition communicates with a server to get the results, so you must give the application the internet access permission. Android Jelly Bean (API level 16) doesn't require an internet connection to perform voice recognition. Once recognition is done, the recognizer returns its results through the parameters of the onActivityResult() method.
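The result code delivered to onActivityResult() tells you how the recognition attempt ended. As a rough sketch, the plain-Java helper below maps a code to a readable message. The class name `RecognitionResultCodes` and its `describe()` method are hypothetical, and the integer constants are local stand-ins that mirror what I understand to be the values of `Activity.RESULT_OK`, `Activity.RESULT_CANCELED` and the `RecognizerIntent.RESULT_*` constants. In a real app, reference the framework constants instead of copying numbers.

```java
// Hypothetical helper for illustration; not part of the Android SDK.
final class RecognitionResultCodes {
    // Local stand-ins (assumed values) for the framework constants.
    static final int RESULT_OK = -1;            // Activity.RESULT_OK
    static final int RESULT_CANCELED = 0;       // Activity.RESULT_CANCELED
    static final int RESULT_NO_MATCH = 1;       // RecognizerIntent.RESULT_NO_MATCH
    static final int RESULT_CLIENT_ERROR = 2;   // RecognizerIntent.RESULT_CLIENT_ERROR
    static final int RESULT_SERVER_ERROR = 3;   // RecognizerIntent.RESULT_SERVER_ERROR
    static final int RESULT_NETWORK_ERROR = 4;  // RecognizerIntent.RESULT_NETWORK_ERROR
    static final int RESULT_AUDIO_ERROR = 5;    // RecognizerIntent.RESULT_AUDIO_ERROR

    private RecognitionResultCodes() {}

    // Translates a result code into a short message suitable for a toast.
    static String describe(int resultCode) {
        switch (resultCode) {
            case RESULT_OK:            return "Success";
            case RESULT_CANCELED:      return "Cancelled";
            case RESULT_NO_MATCH:      return "No Match";
            case RESULT_CLIENT_ERROR:  return "Client Error";
            case RESULT_SERVER_ERROR:  return "Server Error";
            case RESULT_NETWORK_ERROR: return "Network Error";
            case RESULT_AUDIO_ERROR:   return "Audio Error";
            default:                   return "Unknown result code: " + resultCode;
        }
    }
}
```

Keeping the mapping in one small function avoids a long if/else chain in the activity and makes it easy to test without a device.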

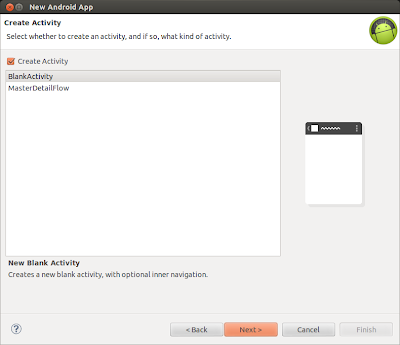

First, create the project via Eclipse > File > New Project > Android Application Project. The following dialog box will appear. Fill in the required fields, i.e. Application Name, Project Name and Package, then press the Next button.

Once the next dialog box appears, select BlankActivity and click the Next button.

Fill in the Activity Name and the Layout file name in the dialog box shown below and hit the Finish button.

This process sets up the basic project files. Now we are going to add the widgets (a text field, a button, a spinner, a label and a list) to the activity_voice_recognition.xml file. You can modify the layout file using either the Graphical Layout editor or the XML editor. The content of the file is shown below. Notice that the speak() method is attached to the button through the onClick attribute. When the button is clicked, speak() is executed. We will define speak() in the main activity.

<LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"

xmlns:tools="http://schemas.android.com/tools"

android:layout_width="match_parent"

android:layout_height="match_parent"

android:orientation="vertical" >

<EditText

android:id="@+id/etTextHint"

android:gravity="top"

android:inputType="textMultiLine"

android:lines="1"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:text="@string/etSearchHint"/>

<Button

android:id="@+id/btSpeak"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:onClick="speak"

android:padding="@dimen/padding_medium"

android:text="@string/btSpeak"

tools:context=".VoiceRecognitionActivity" />

<Spinner

android:id="@+id/sNoOfMatches"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:entries="@array/saNoOfMatches"

android:prompt="@string/sNoOfMatches"/>

<TextView

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:text="@string/tvTextMatches"

android:textStyle="bold" />

<ListView

android:id="@+id/lvTextMatches"

android:layout_width="match_parent"

android:layout_height="wrap_content" />

</LinearLayout>

You may have noticed that the string constants are read from resources. Now add the string constants to strings.xml. The file should look similar to the one shown below.

<resources>

<string name="app_name">VoiceRecognitionExample</string>

<string name="btSpeak">Speak</string>

<string name="menu_settings">Settings</string>

<string name="title_activity_voice_recognition">Voice Recognition</string>

<string name="tvTextMatches">Text Matches</string>

<string name="sNoOfMatches">No of Matches</string>

<string name="etSearchHint">Speech hint here</string>

<string-array name="saNoOfMatches">

<item>1</item>

<item>2</item>

<item>3</item>

<item>4</item>

<item>5</item>

<item>6</item>

<item>7</item>

<item>8</item>

<item>9</item>

<item>10</item>

</string-array>

</resources>
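The saNoOfMatches array supplies the spinner entries as strings, and the activity later has to turn the selected entry into an int for the recognizer. A defensive conversion could look like the sketch below. The `MatchCount` class, its bounds and its default are assumptions made for illustration (the bounds simply mirror the 1 to 10 range of the array above); the tutorial's own code calls Integer.parseInt() directly.

```java
// Hypothetical helper for illustration; not part of the tutorial's project.
final class MatchCount {
    static final int MIN = 1;      // smallest entry in saNoOfMatches
    static final int MAX = 10;     // largest entry in saNoOfMatches
    static final int DEFAULT = 1;  // assumed fallback when the input is unusable

    private MatchCount() {}

    // Parses the selected spinner entry, clamping it to [MIN, MAX].
    // Returns DEFAULT when the text is null or not a number.
    static int parse(String selected) {
        if (selected == null) {
            return DEFAULT;
        }
        try {
            int n = Integer.parseInt(selected.trim());
            return Math.max(MIN, Math.min(MAX, n));
        } catch (NumberFormatException e) {
            return DEFAULT;
        }
    }
}
```

With the fixed array above the fallback should never trigger, but it keeps the activity from crashing if the entries are later edited to something non-numeric.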

Now let's define the Activity class. With the help of the checkVoiceRecognition() method, the activity first checks whether voice recognition is available. If it is not, the activity shows a toast message and disables the button. The speak() method is also defined here; it is called when the Speak button is pressed. In this method we create the recognizer Intent and pass the extra parameters. The code below has embedded comments that make it easy to follow.

package com.rakesh.voicerecognitionexample;

import java.util.ArrayList;

import java.util.List;

import android.app.Activity;

import android.app.SearchManager;

import android.content.Intent;

import android.content.pm.PackageManager;

import android.content.pm.ResolveInfo;

import android.os.Bundle;

import android.speech.RecognizerIntent;

import android.view.View;

import android.widget.AdapterView;

import android.widget.ArrayAdapter;

import android.widget.Button;

import android.widget.EditText;

import android.widget.ListView;

import android.widget.Spinner;

import android.widget.Toast;

public class VoiceRecognitionActivity extends Activity {

private static final int VOICE_RECOGNITION_REQUEST_CODE = 1001;

private EditText metTextHint;

private ListView mlvTextMatches;

private Spinner msTextMatches;

private Button mbtSpeak;

@Override

public void onCreate(Bundle savedInstanceState) {

super.onCreate(savedInstanceState);

setContentView(R.layout.activity_voice_recognition);

metTextHint = (EditText) findViewById(R.id.etTextHint);

mlvTextMatches = (ListView) findViewById(R.id.lvTextMatches);

msTextMatches = (Spinner) findViewById(R.id.sNoOfMatches);

mbtSpeak = (Button) findViewById(R.id.btSpeak);

checkVoiceRecognition();

}

public void checkVoiceRecognition() {

// Check if voice recognition is present

PackageManager pm = getPackageManager();

List<ResolveInfo> activities = pm.queryIntentActivities(new Intent(

RecognizerIntent.ACTION_RECOGNIZE_SPEECH), 0);

if (activities.size() == 0) {

mbtSpeak.setEnabled(false);

mbtSpeak.setText("Voice recognizer not present");

Toast.makeText(this, "Voice recognizer not present",

Toast.LENGTH_SHORT).show();

}

}

public void speak(View view) {

Intent intent = new Intent(RecognizerIntent.ACTION_RECOGNIZE_SPEECH);

// Specify the calling package to identify your application

intent.putExtra(RecognizerIntent.EXTRA_CALLING_PACKAGE, getClass()

.getPackage().getName());

// Display a hint to the user about what they should say.

intent.putExtra(RecognizerIntent.EXTRA_PROMPT, metTextHint.getText()

.toString());

// Give the recognizer a hint about what the user is going to say.

// There are two language models available:

// 1. LANGUAGE_MODEL_WEB_SEARCH : for short phrases

// 2. LANGUAGE_MODEL_FREE_FORM : if not sure about the words or phrases and their domain

intent.putExtra(RecognizerIntent.EXTRA_LANGUAGE_MODEL,

RecognizerIntent.LANGUAGE_MODEL_WEB_SEARCH);

// If the number of matches is not selected, show a toast message and return

if (msTextMatches.getSelectedItemPosition() == AdapterView.INVALID_POSITION) {

Toast.makeText(this, "Please select No. of Matches from spinner",

Toast.LENGTH_SHORT).show();

return;

}

int noOfMatches = Integer.parseInt(msTextMatches.getSelectedItem()

.toString());

// Specify how many results you want to receive. The results will be

// sorted where the first result is the one with higher confidence.

intent.putExtra(RecognizerIntent.EXTRA_MAX_RESULTS, noOfMatches);

//Start the Voice recognizer activity for the result.

startActivityForResult(intent, VOICE_RECOGNITION_REQUEST_CODE);

}

@Override

protected void onActivityResult(int requestCode, int resultCode, Intent data) {

if (requestCode == VOICE_RECOGNITION_REQUEST_CODE)

//If Voice recognition is successful then it returns RESULT_OK

if(resultCode == RESULT_OK) {

ArrayList<String> textMatchList = data

.getStringArrayListExtra(RecognizerIntent.EXTRA_RESULTS);

if (!textMatchList.isEmpty()) {

// If first Match contains the 'search' word

// Then start web search.

if (textMatchList.get(0).contains("search")) {

String searchQuery = textMatchList.get(0);

searchQuery = searchQuery.replace("search","");

Intent search = new Intent(Intent.ACTION_WEB_SEARCH);

search.putExtra(SearchManager.QUERY, searchQuery);

startActivity(search);

} else {

// populate the Matches

mlvTextMatches

.setAdapter(new ArrayAdapter<String>(this,

android.R.layout.simple_list_item_1,

textMatchList));

}

}

// Result codes for the various errors.

}else if(resultCode == RecognizerIntent.RESULT_AUDIO_ERROR){

showToastMessage("Audio Error");

}else if(resultCode == RecognizerIntent.RESULT_CLIENT_ERROR){

showToastMessage("Client Error");

}else if(resultCode == RecognizerIntent.RESULT_NETWORK_ERROR){

showToastMessage("Network Error");

}else if(resultCode == RecognizerIntent.RESULT_NO_MATCH){

showToastMessage("No Match");

}else if(resultCode == RecognizerIntent.RESULT_SERVER_ERROR){

showToastMessage("Server Error");

}

super.onActivityResult(requestCode, resultCode, data);

}

/**

* Helper method to show the toast message

**/

void showToastMessage(String message){

Toast.makeText(this, message, Toast.LENGTH_SHORT).show();

}

}
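The "search" handling in onActivityResult() can be pulled out into a small function that is easy to test without a device. The sketch below is a variation, not the code above: it only treats the phrase as a search command when it starts with the whole word "search", whereas the activity matches "search" anywhere in the phrase (so a phrase such as "research papers" would also trigger a web search there). The `VoiceCommands` class and `extractSearchQuery()` are hypothetical names introduced for this example.

```java
// Hypothetical helper for illustration; not part of the tutorial's project.
final class VoiceCommands {
    private static final String KEYWORD = "search";

    private VoiceCommands() {}

    // Returns the query that follows a leading "search" keyword,
    // or null when the phrase is not a search command.
    static String extractSearchQuery(String phrase) {
        if (phrase == null) {
            return null;
        }
        String trimmed = phrase.trim();
        // Case-insensitive check that the phrase begins with the keyword.
        if (!trimmed.regionMatches(true, 0, KEYWORD, 0, KEYWORD.length())) {
            return null;
        }
        // Require a word boundary so that "searching" does not match.
        if (trimmed.length() > KEYWORD.length()
                && !Character.isWhitespace(trimmed.charAt(KEYWORD.length()))) {
            return null;
        }
        String query = trimmed.substring(KEYWORD.length()).trim();
        return query.isEmpty() ? null : query;
    }
}
```

In the activity, a non-null return value would be passed as SearchManager.QUERY to the web search Intent, and a null return value would fall through to populating the list of matches.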

Here is the Android manifest file. Note that the INTERNET permission is granted to the application because the voice recognizer needs to send the query to the server and get the result.

<manifest xmlns:android="http://schemas.android.com/apk/res/android"

package="com.rakesh.voicerecognitionexample"

android:versionCode="1"

android:versionName="1.0" >

<uses-sdk

android:minSdkVersion="8"

android:targetSdkVersion="15" />

<!-- Permissions -->

<uses-permission android:name="android.permission.INTERNET" />

<application

android:icon="@drawable/ic_launcher"

android:label="@string/app_name"

android:theme="@style/AppTheme" >

<activity

android:name=".VoiceRecognitionActivity"

android:label="@string/title_activity_voice_recognition" >

<intent-filter>

<action android:name="android.intent.action.MAIN" />

<category android:name="android.intent.category.LAUNCHER" />

</intent-filter>

</activity>

</application>

</manifest>

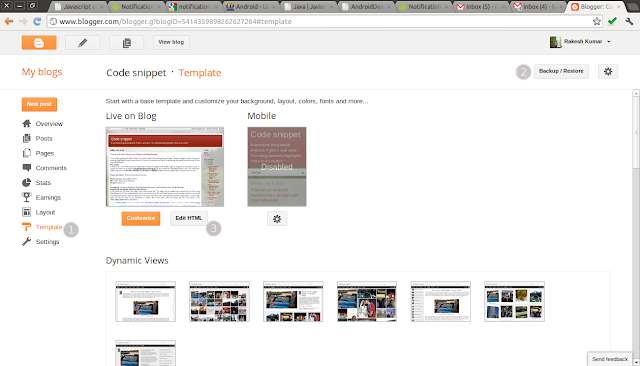

Once you are done coding, connect the phone to your system and hit the Run button in the Eclipse IDE. Eclipse will install and launch the application. You will see the following activities on your device screen. If you are interested in the source code, you can get it from GitHub.
